Sound of Touch: Active Acoustic Tactile Sensing via String Vibrations

- Xili Yi1

- Ying Xing1

- Zachary Manchester2

- Nima Fazeli1

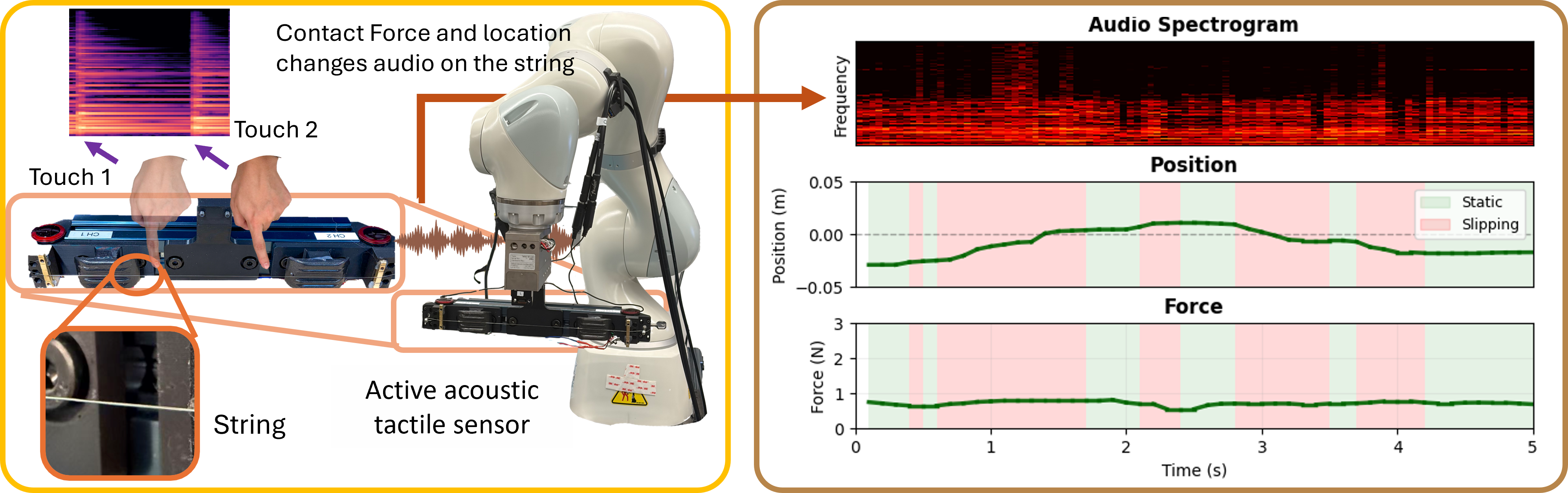

TL;DR: We treat a continuously excited vibrating string as an active acoustic tactile sensor, and use short two-channel audio windows to infer contact location, contact force, and slippage in real time.

Experiment Video

Abstract

Distributed tactile sensing is hard to scale over large robot surfaces because dense arrays increase wiring, cost, and fragility. Sound of Touch is an active acoustic tactile sensing method that uses continuously excited tensioned strings as sensing elements. Two contact microphones observe spectral changes caused by contact. From short-duration audio, the system estimates contact location and normal force, and detects slip. A physics-grounded string vibration simulator explains how contact position and force shift vibration modes. Experiments show millimeter-scale localization, reliable force estimation, and real-time slip detection.

Method Overview

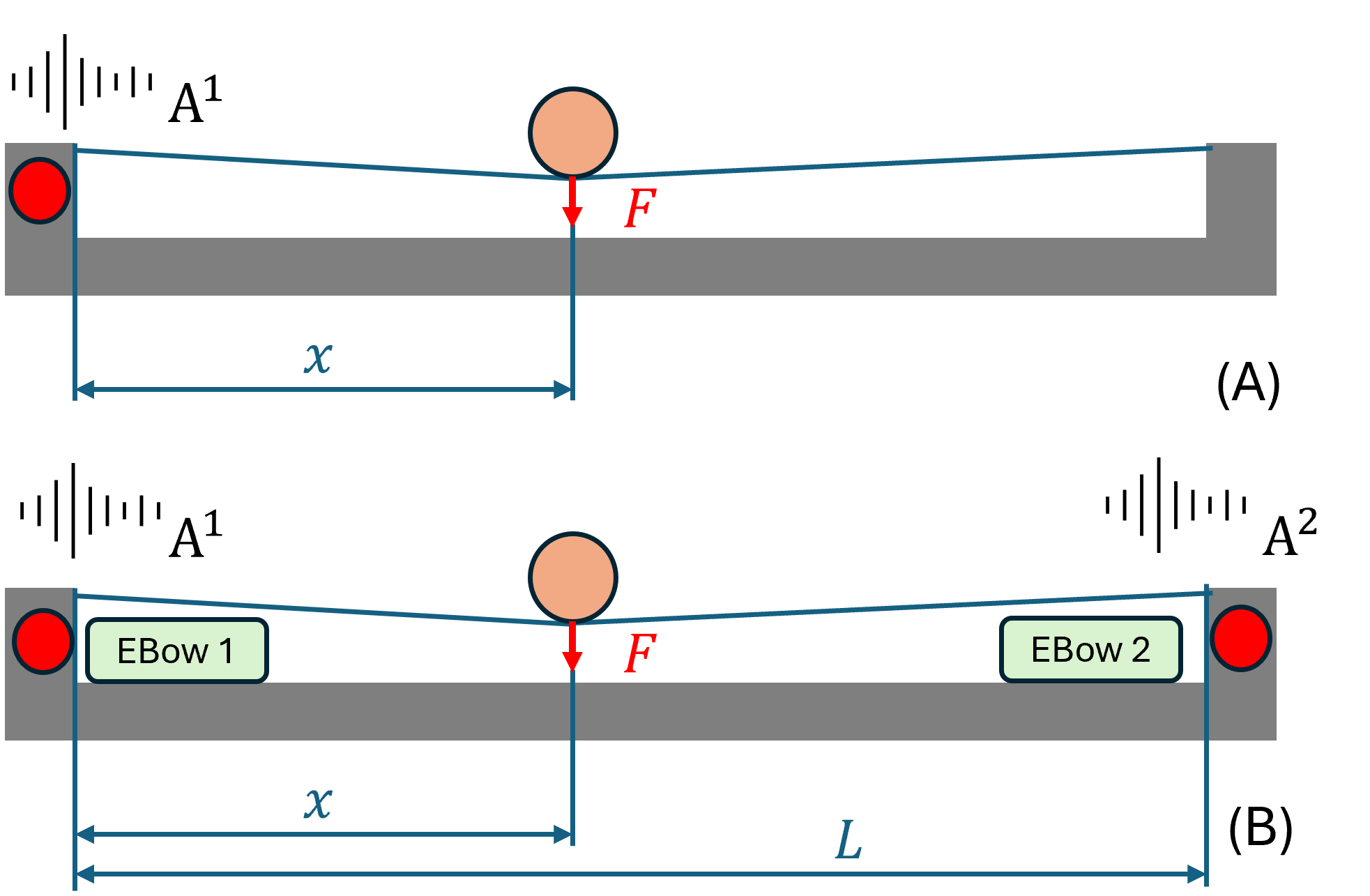

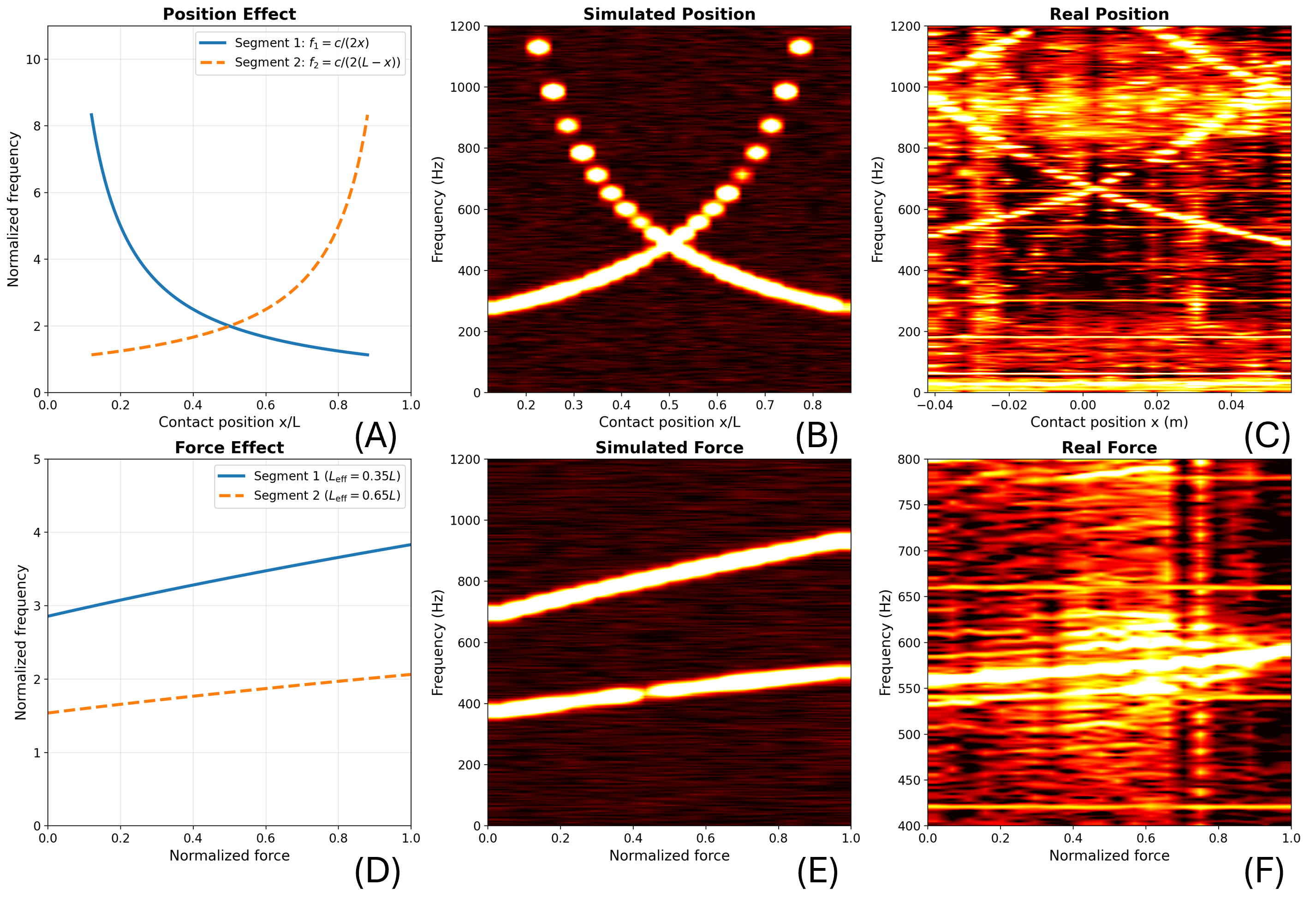

The sensor uses a steel string, two EBow-style electromagnetic drivers for continuous excitation, and two contact microphones. A contact at position x with normal force F changes the string's effective length and tension, which shifts its resonances in a structured way. A split-string simulator predicts these trends and supports design analysis, while a real-world inference model maps audio to a contact-state tuple (contact, location, force, slip).
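The split-string intuition can be illustrated with the textbook ideal-string formula f = sqrt(T / mu) / (2 L). The sketch below is not the authors' simulator: it assumes the contact acts as a rigid node that divides the string into two independent segments, and the string length, tension, and linear density used in the demo are hypothetical values chosen only for illustration.

```python
import math


def fundamental_hz(length_m, tension_n, mu_kg_per_m):
    """Fundamental frequency of an ideal string fixed at both ends."""
    return math.sqrt(tension_n / mu_kg_per_m) / (2.0 * length_m)


def split_string_fundamentals(x_m, total_length_m, tension_n, mu_kg_per_m):
    """Fundamentals of the two segments created by a contact at x_m.

    Assumption: the contact behaves as a rigid node, so each segment
    vibrates like a shorter ideal string under the same tension.
    """
    if not 0.0 < x_m < total_length_m:
        raise ValueError("contact must lie strictly between the string ends")
    left = fundamental_hz(x_m, tension_n, mu_kg_per_m)
    right = fundamental_hz(total_length_m - x_m, tension_n, mu_kg_per_m)
    return left, right


if __name__ == "__main__":
    # Hypothetical parameters, not taken from the paper.
    LENGTH_M = 0.5
    MU = 0.001  # kg/m
    for tension in (100.0, 110.0):  # a larger force is modeled as higher tension
        for x in (0.10, 0.25, 0.40):
            left, right = split_string_fundamentals(x, LENGTH_M, tension, MU)
            print(f"T={tension:.0f} N, x={x:.2f} m: {left:.1f} Hz / {right:.1f} Hz")
```

Under this simplified model, moving the contact changes the ratio of the two segment frequencies, while a tension increase scales both upward together, which is one way to see why location and force leave distinguishable spectral signatures.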

Concept design and sensing principle

Contact-induced resonant frequency shifts

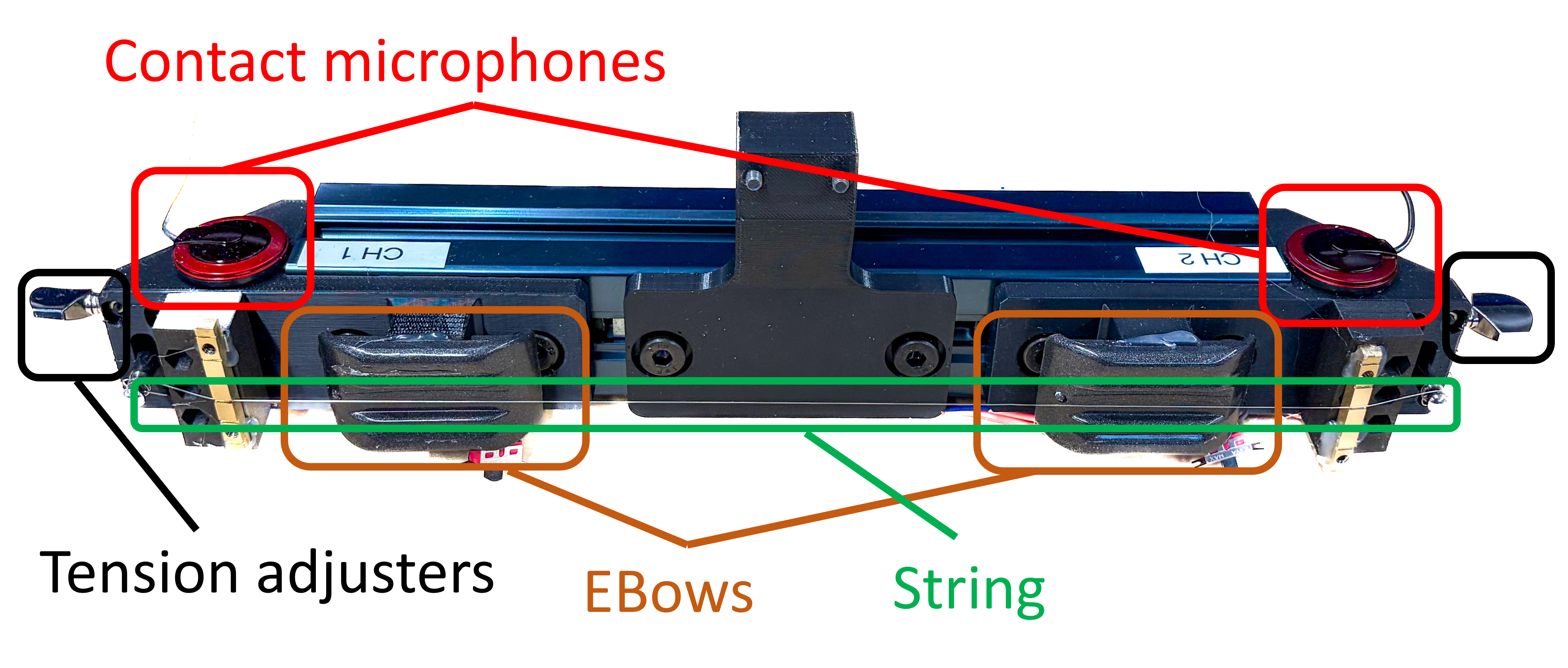

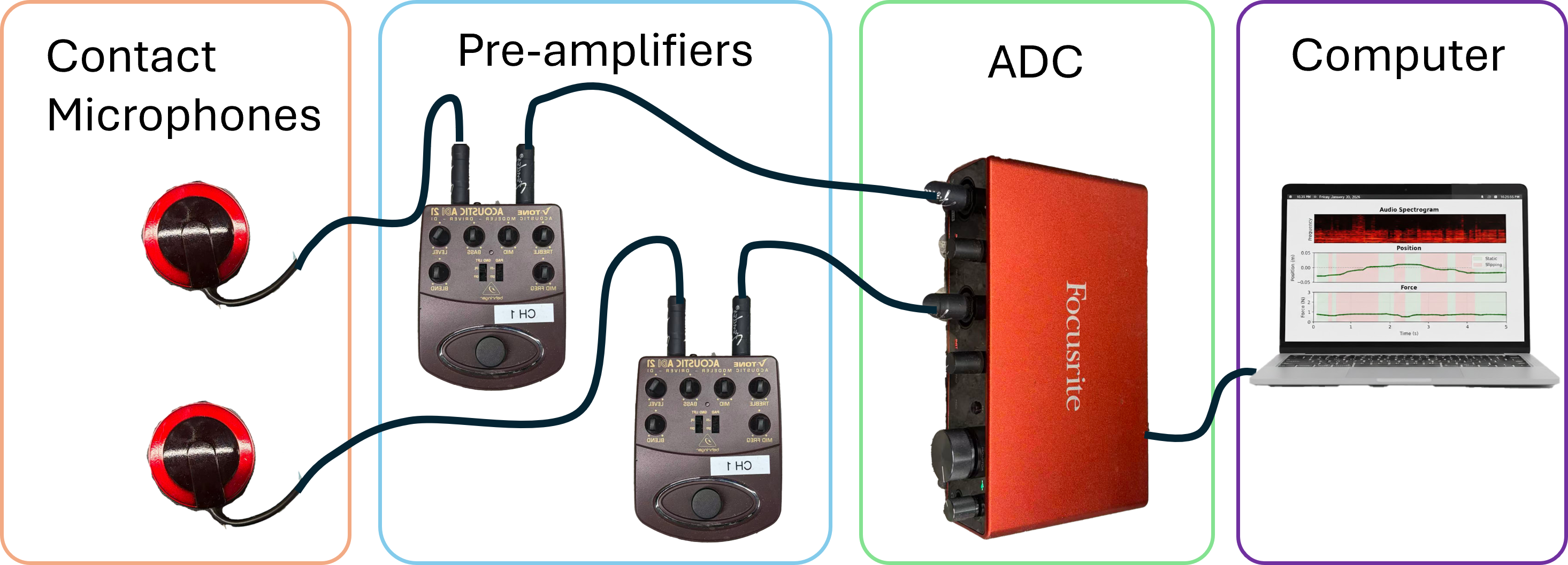

Hardware and System

The prototype is built on an aluminum frame with guitar hardware and a 10 cm sensing range. Microphone signals are independently amplified and streamed through a Focusrite Scarlett 4i4 interface at 44.1 kHz using a low-latency JACK setup for real-time inference.
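For real-time inference, the stream has to be cut into short two-channel windows. A minimal sketch of a rolling buffer that could sit between the audio callback and the model is shown here. The `RollingWindow` class and the 0.1 s window length are hypothetical; only the 44.1 kHz sample rate and the two channels come from the description above, and the JACK callback itself is not shown.

```python
import numpy as np

SAMPLE_RATE_HZ = 44_100  # from the text
NUM_CHANNELS = 2         # two contact microphones


class RollingWindow:
    """Keeps the most recent `window_s` seconds of multi-channel audio."""

    def __init__(self, window_s, sample_rate_hz=SAMPLE_RATE_HZ, channels=NUM_CHANNELS):
        self.size = int(round(window_s * sample_rate_hz))
        self.buf = np.zeros((self.size, channels), dtype=np.float32)
        self.filled = 0  # number of valid frames, capped at self.size

    def push(self, block):
        """Append a block of shape (frames, channels), dropping the oldest frames."""
        block = np.asarray(block, dtype=np.float32)
        if block.ndim != 2 or block.shape[1] != self.buf.shape[1]:
            raise ValueError("block must have shape (frames, channels)")
        n = block.shape[0]
        if n == 0:
            return
        if n >= self.size:
            self.buf = block[-self.size:].copy()
        else:
            self.buf = np.concatenate([self.buf[n:], block], axis=0)
        self.filled = min(self.size, self.filled + n)

    def ready(self):
        """True once a full window of real samples has been received."""
        return self.filled >= self.size

    def latest(self):
        """Return a copy of the current window, oldest frame first."""
        return self.buf.copy()


if __name__ == "__main__":
    window = RollingWindow(window_s=0.1)  # hypothetical window length
    while not window.ready():
        window.push(np.zeros((256, NUM_CHANNELS)))  # stand-in for one audio block
    print(window.latest().shape)
```

Copying on every push keeps the sketch simple; a preallocated ring buffer would avoid the allocation if callback latency became a concern.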

Sensor hardware

Audio acquisition pipeline

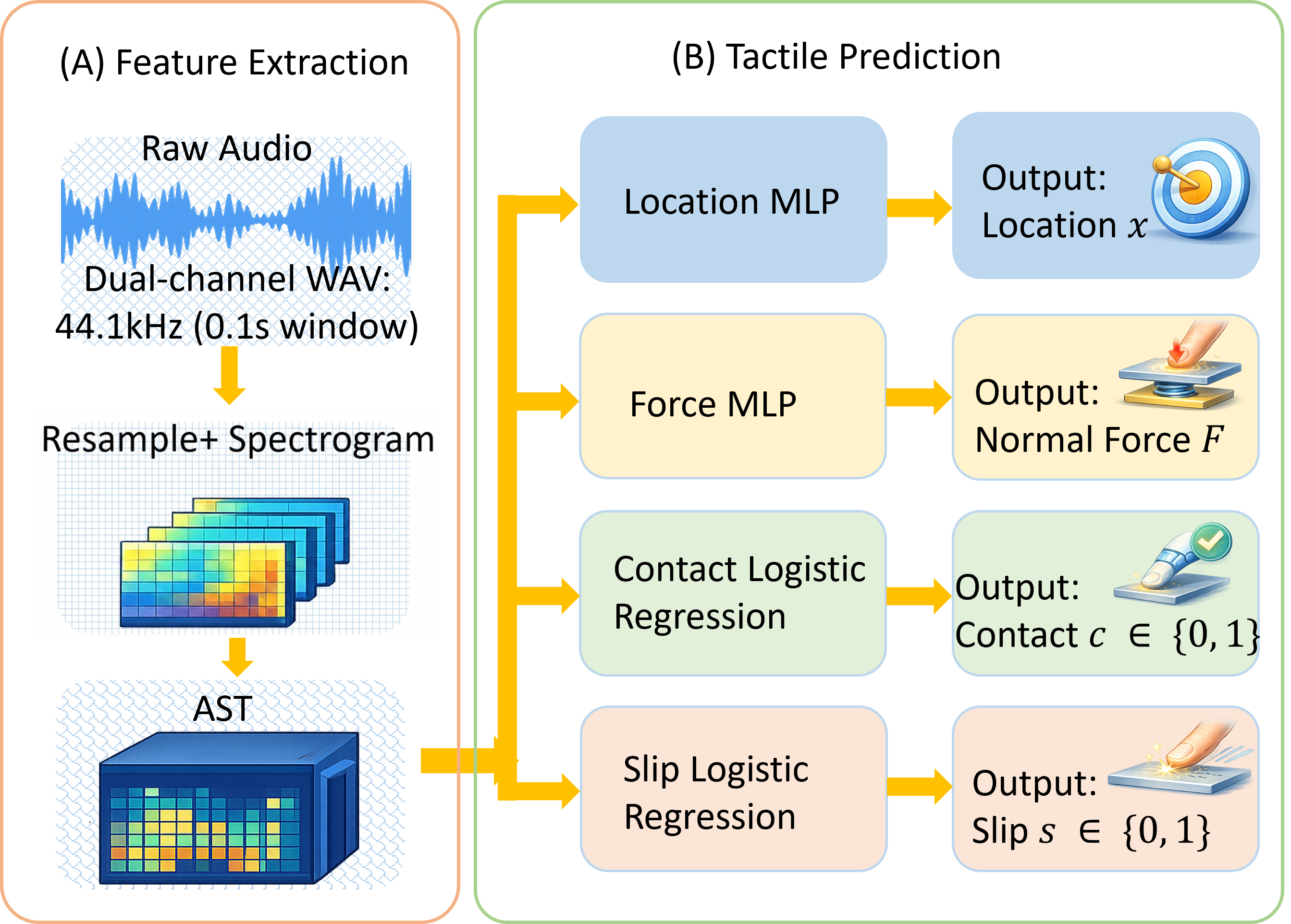

Learning Pipeline

Audio windows are transformed into spectral inputs and encoded using a frozen Audio Spectrogram Transformer (AST). Lightweight task-specific heads estimate contact and slip (binary classification) and location and force (regression). The heads are trained jointly, with the regression losses masked so that location and force are supervised only on windows where contact is present.
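The masking idea can be sketched in a few lines of NumPy. This is an illustration under assumptions, not the authors' training code: the choice of binary cross-entropy for the classification heads, an L1 loss for the regression heads, equal task weights, and the dictionary keys are all hypothetical. The slip loss is left unmasked here because the text does not say otherwise.

```python
import numpy as np


def bce_with_logits(logits, targets):
    """Numerically stable element-wise binary cross-entropy on raw logits."""
    logits = np.asarray(logits, dtype=np.float64)
    targets = np.asarray(targets, dtype=np.float64)
    return np.maximum(logits, 0.0) - logits * targets + np.log1p(np.exp(-np.abs(logits)))


def masked_multitask_loss(pred, target):
    """Joint loss where regression terms count only for contact samples.

    pred:   dict with 'contact_logit', 'slip_logit', 'location', 'force'
    target: dict with 'contact', 'slip' (0/1) and 'location', 'force'
    """
    contact = np.asarray(target["contact"], dtype=np.float64)
    classification = (
        bce_with_logits(pred["contact_logit"], contact).mean()
        + bce_with_logits(pred["slip_logit"], target["slip"]).mean()
    )
    # Avoid division by zero when a batch contains no contact windows.
    n_contact = max(contact.sum(), 1.0)
    loc_err = np.abs(np.asarray(pred["location"], dtype=np.float64) - target["location"])
    force_err = np.abs(np.asarray(pred["force"], dtype=np.float64) - target["force"])
    location = (loc_err * contact).sum() / n_contact
    force = (force_err * contact).sum() / n_contact
    return {
        "total": classification + location + force,
        "classification": classification,
        "location": location,
        "force": force,
    }
```

With this masking, location and force predictions on no-contact windows contribute nothing to the loss, so the regression heads are not pushed toward arbitrary labels when there is nothing to localize.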

Results

Contact and slip classification reached 100% accuracy on the evaluated test set. Detailed regression metrics are shown below.

Contact Location Estimation

| Object | Condition | MAE (mm) | Error ≤ 5 mm (%) | Pearson r |
|---|---|---|---|---|
| Plastic | Clean | 2.7 | 87.8 | 0.994 |
| Plastic | Noise | 3.1 | 83.3 | 0.994 |
| Wood | Clean | 8.8 | 51.4 | 0.863 |
| Wood | Noise | 8.6 | 55.7 | 0.857 |
| Metal tube | Clean | 5.4 | 70.0 | 0.952 |
| Metal tube | Noise | 8.0 | 57.1 | 0.893 |
| Allen key | Clean | 2.7 | 82.9 | 0.993 |
| Allen key | Noise | 2.8 | 87.1 | 0.992 |

Contact Force Estimation

| Object | Condition | MAE (N) | Error ≤ 0.2 N (%) | Pearson r |
|---|---|---|---|---|
| Plastic | Clean | 0.141 | 74.4 | 0.909 |
| Plastic | Noise | 0.131 | 75.6 | 0.928 |
| Wood | Clean | 0.111 | 84.3 | 0.903 |
| Wood | Noise | 0.115 | 82.9 | 0.927 |
| Metal tube | Clean | 0.172 | 70.0 | 0.908 |
| Metal tube | Noise | 0.173 | 65.7 | 0.906 |
| Allen key | Clean | 0.167 | 67.1 | 0.844 |
| Allen key | Noise | 0.165 | 65.7 | 0.819 |
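
For readers who want to reproduce the three reported columns on their own predictions, the metrics can be computed as in the sketch below. The function name and the sample numbers are hypothetical; the tolerance would be 5 (mm) for location and 0.2 (N) for force, matching the table headers.

```python
import numpy as np


def regression_metrics(y_true, y_pred, tolerance):
    """Return MAE, percent of samples within `tolerance`, and Pearson r."""
    y_true = np.asarray(y_true, dtype=np.float64)
    y_pred = np.asarray(y_pred, dtype=np.float64)
    abs_err = np.abs(y_pred - y_true)
    return {
        "mae": float(abs_err.mean()),
        "within_pct": float(100.0 * np.mean(abs_err <= tolerance)),
        "pearson_r": float(np.corrcoef(y_true, y_pred)[0, 1]),
    }


if __name__ == "__main__":
    # Made-up location values in mm, for illustration only.
    truth = [0.0, 10.0, 20.0, 30.0]
    estimate = [1.0, 9.0, 26.0, 30.0]
    print(regression_metrics(truth, estimate, tolerance=5.0))
```

Note that Pearson r measures linear agreement only, so a systematically offset or scaled estimator can still score close to 1. This is why it is reported alongside MAE and the within-tolerance rate.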

Contact

For questions, contact Xili Yi.